Telegram founder Pavel Durov is making a serious accusation against European governments. He claims EU and UK authorities secretly offer social media CEOs deals to suppress dissent — and use child protection as cover when those CEOs say no.

Elon Musk publicly agreed with him. And the timing couldn’t be more loaded.

Secret Deals and Criminal Charges

Durov laid out what he calls a clear pattern across European governments. First, he says, authorities approach platform executives with informal, off-the-record agreements to restrict certain content. Refuse the deal, and criminal proceedings follow — almost always justified under child safety laws.

“When people push back, say it’s ‘all for the children,’” Durov wrote. “‘Protecting children’ has become the standard legal/PR cover.”

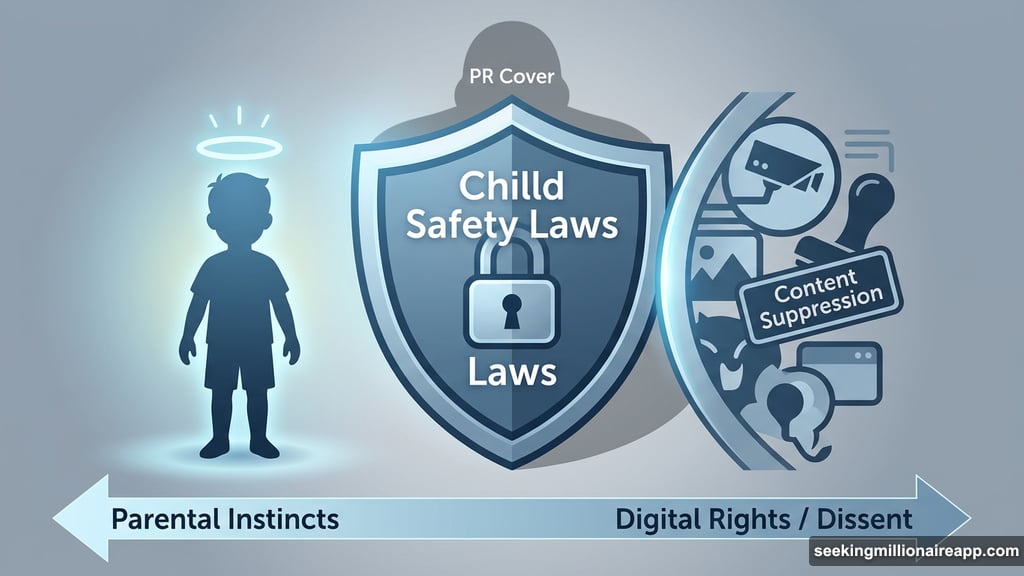

His argument goes further than just politics. Durov says child safety rhetoric deliberately exploits parental instincts to shut down any serious conversation about surveillance and digital rights. By framing censorship as child protection, he argues, regulators make it nearly impossible to push back without looking like you’re defending harm.

It’s a bold claim. But Durov has skin in the game.

Durov Knows This Pattern Firsthand

This isn’t abstract theory for Durov. French authorities arrested him at a Paris airport in August 2024 and hit him with 12 charges, including alleged complicity in distributing child exploitation material. His travel ban was lifted in November 2025, though the investigation is still ongoing.

He recently revealed he faces more than a dozen charges, each carrying up to 10 years in prison.

Musk weighed in to support Durov’s criticism. He also dismissed the separate French investigation into X as a “political attack.” That probe centers on allegations that X facilitated the spread of child abuse material and deepfakes, and French prosecutors summoned Musk for a voluntary interview on the same day Durov made his posts.

The US Department of Justice rejected France’s request for assistance in the X investigation, calling it an attempt to “entangle the United States in a politically charged criminal proceeding.” That’s a pretty direct rebuke.

What’s Happening in the UK

The Durov-Musk exchange didn’t happen in a vacuum. On April 16, UK Prime Minister Keir Starmer summoned executives from X, Meta, Snap, YouTube, and TikTok to Downing Street for a direct conversation about children online.

Starmer’s message was blunt: banning children from social media platforms would be “preferable to a world where harm is the price” for their use.

“I know parents are worried about social media and its impact on their children’s safety,” Starmer said. “I will do whatever it takes to keep children safe online.”

On the surface, that sounds hard to argue with. But critics like Durov see a different subtext: platforms that don’t cooperate with government content expectations get squeezed through regulatory pressure dressed up as child protection.

Two Very Real Concerns at Once

Here’s where this gets genuinely complicated. Both things can be true at the same time.

Children do face real harm online. Exploitation material, dangerous content, and predatory behavior are documented problems that platforms have historically been slow to address. Regulators pushing back on that isn’t inherently sinister.

But Durov’s underlying point — that “child safety” has become a flexible legal tool governments can aim at any platform that hosts inconvenient political speech — deserves serious scrutiny too. The fact that the US DOJ called the French request politically charged suggests this concern isn’t just coming from tech billionaires with obvious self-interest.

Where This Goes From Here

France’s investigation into X is still moving forward. Durov’s own case continues alongside it. Neither will resolve quickly, and both will keep this debate firmly in the spotlight.

What Durov and Musk represent in this fight matters beyond their personal legal situations. They’re the most prominent voices arguing that European regulators have found a politically bulletproof framing, child safety, to pressure social media platforms into compliance. And they’re using their own platforms to make that case.

Whether that framing holds up will depend on how courts, governments, and the public weigh two genuinely competing priorities: protecting children online and protecting speech from regulatory overreach. Right now, both sides are digging in, and neither investigation is close to finished.